Policy Gradient Approach

- If we can calculate the gradient of the expected reward with respect to the policy parameters, we can use gradient ascent (equivalently, gradient descent on the negative expected reward) to improve the policy

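- Loss function example: REINFORCE (Williams, 1992). In its standard form:

$$L(\theta) = -\sum_{t} G_t \, \log \pi_\theta(a_t \mid s_t)$$

where:

- $\pi_\theta(a_t \mid s_t)$ is the probability that the policy with parameters $\theta$ assigns to action $a_t$ in state $s_t$
- $G_t = \sum_{k \ge 0} \gamma^k r_{t+k}$ is the discounted return from step $t$ onward
- $\gamma \in [0, 1]$ is the discount factor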
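As a rough illustration, the REINFORCE loss for one episode can be computed from the probabilities the policy assigned to the actions it actually took. This is a minimal sketch in plain Python; the function names and the default `gamma` are assumptions for illustration, and a real implementation would compute the log-probabilities inside an autodiff framework so the loss can be differentiated.

```python
import math


def discounted_returns(rewards, gamma):
    """Return G_t = r_t + gamma * r_{t+1} + ... for every step t."""
    returns = []
    running = 0.0
    # Walk backwards so each return reuses the one after it.
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def reinforce_loss(action_probs, rewards, gamma=0.99):
    """Negative return-weighted log-likelihood of the actions taken.

    action_probs[t] is the probability the policy gave to the action
    chosen at step t; rewards[t] is the reward received after it.
    """
    returns = discounted_returns(rewards, gamma)
    return -sum(g * math.log(p) for g, p in zip(returns, action_probs))
```

Minimizing this loss raises the probability of actions that were followed by high returns.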

Value-based methods

- The policy gradient approach optimizes the policy directly, but its gradient estimates are noisy, so in practice it only really works for simple policies

- Value-based methods instead explore the space and learn the value of taking a given action in a given state

- Based on Markov Decision Processes (review from AI?)

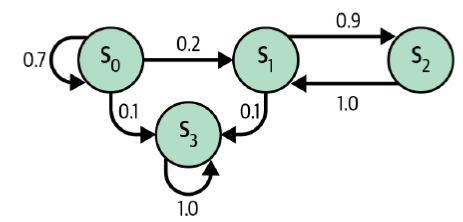

Markov Chains

- A Markov Chain is a model of random states where the future state depends only on the current state (a memoryless process)

- Used to model real-world processes, e.g. Google's PageRank algorithm
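A Markov chain can be written as a transition matrix and simulated in a few lines. The sketch below uses a made-up four-state chain (the probabilities are assumptions, not taken from the figure) and treats a state as terminal when it transitions to itself with probability 1.

```python
import random

# Hypothetical chain: P[i][j] = probability of moving from state i to state j.
P = [
    [0.7, 0.2, 0.0, 0.1],
    [0.0, 0.0, 0.9, 0.1],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],  # state 3 only ever returns to itself
]


def terminal_states(P):
    """States that can never be left (self-transition probability 1)."""
    return [s for s, row in enumerate(P) if row[s] == 1.0]


def simulate(P, start, max_steps, rng):
    """Sample a path; the next state depends only on the current one."""
    state = start
    path = [state]
    for _ in range(max_steps):
        if P[state][state] == 1.0:
            break  # reached a terminal state
        state = rng.choices(range(len(P)), weights=P[state])[0]
        path.append(state)
    return path
```

For example, `simulate(P, 0, 50, random.Random(0))` returns one sampled path starting from state 0.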

Which of these is the terminal state?

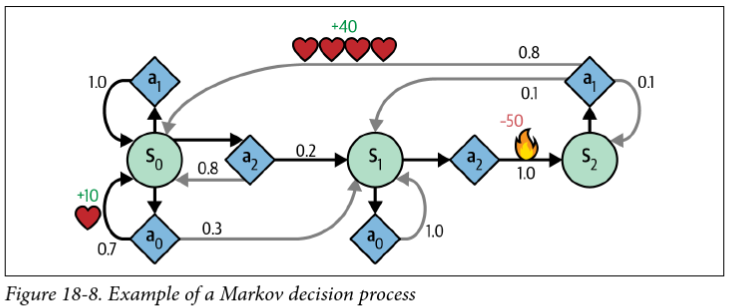

Markov Decision Processes

- Like a Markov Chain, but with actions and rewards

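- Bellman optimality equation:

$$V^*(s) = \max_a \sum_{s'} T(s, a, s') \left[ R(s, a, s') + \gamma V^*(s') \right]$$

where $T(s, a, s')$ is the probability of moving from state $s$ to state $s'$ under action $a$, $R(s, a, s')$ is the reward for that transition, and $\gamma$ is the discount factor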

Iterative solution to Bellman's equation

Value Iteration:

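- Initialize $V_0(s) = 0$ for all states $s$

- Update $V_{k+1}(s) \leftarrow \max_a \sum_{s'} T(s, a, s') \left[ R(s, a, s') + \gamma V_k(s') \right]$ for all $s$, where $T$ and $R$ are the transition probabilities and rewards and $\gamma$ is the discount factor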

- Repeat until convergence
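The three steps above can be sketched as follows. The two-state MDP, the dictionary layout, and the stopping tolerance are all assumptions chosen to keep the example small; they are not from the slides.

```python
# Hypothetical two-state MDP:
# MDP[state][action] = list of (probability, next_state, reward)
MDP = {
    0: {
        "stay": [(1.0, 0, 0.0)],
        "go": [(0.8, 1, 1.0), (0.2, 0, 0.0)],
    },
    1: {"stay": [(1.0, 1, 0.0)]},  # absorbing state, no further reward
}


def value_iteration(mdp, gamma=0.9, tol=1e-8, max_iters=10_000):
    # Initialize: all state values start at zero.
    V = {s: 0.0 for s in mdp}
    for _ in range(max_iters):
        # Update: apply the Bellman optimality backup to every state.
        new_V = {
            s: max(
                sum(p * (r + gamma * V[s2]) for p, s2, r in outcomes)
                for outcomes in actions.values()
            )
            for s, actions in mdp.items()
        }
        # Repeat until the largest change falls below the tolerance.
        delta = max(abs(new_V[s] - V[s]) for s in mdp)
        V = new_V
        if delta < tol:
            break
    return V
```

For this toy MDP the value of state 0 should settle near 0.8 / (1 - 0.2 * 0.9), since "go" is the better action there.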

Problem: we still don't know the optimal policy

Q-Values

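Bellman's equation for Q-values (optimal state-action values):

$$Q^*(s, a) = \sum_{s'} T(s, a, s') \left[ R(s, a, s') + \gamma \max_{a'} Q^*(s', a') \right]$$

Optimal policy

$$\pi^*(s) = \arg\max_a Q^*(s, a)$$

For small spaces, we can use dynamic programming to iteratively solve for $Q^*(s, a)$ (Q-value iteration):

$$Q_{k+1}(s, a) \leftarrow \sum_{s'} T(s, a, s') \left[ R(s, a, s') + \gamma \max_{a'} Q_k(s', a') \right]$$

Here $T$ and $R$ are the transition probabilities and rewards, and $\gamma$ is the discount factor.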
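A minimal sketch of Q-value iteration, followed by reading the greedy policy off the resulting table. The toy MDP and its dictionary layout are assumptions made for illustration.

```python
# Hypothetical two-state MDP:
# MDP[state][action] = list of (probability, next_state, reward)
MDP = {
    0: {
        "stay": [(1.0, 0, 0.0)],
        "go": [(0.8, 1, 1.0), (0.2, 0, 0.0)],
    },
    1: {"stay": [(1.0, 1, 0.0)]},
}


def q_value_iteration(mdp, gamma=0.9, n_iters=200):
    Q = {s: {a: 0.0 for a in actions} for s, actions in mdp.items()}
    for _ in range(n_iters):
        # The comprehension reads the old Q and builds a fresh table.
        Q = {
            s: {
                a: sum(
                    p * (r + gamma * max(Q[s2].values()))
                    for p, s2, r in outcomes
                )
                for a, outcomes in actions.items()
            }
            for s, actions in mdp.items()
        }
    return Q


def greedy_policy(Q):
    """Pick the action with the highest Q-value in every state."""
    return {s: max(q_s, key=q_s.get) for s, q_s in Q.items()}
```

Unlike plain state values, the Q-table gives the policy directly: no extra lookahead through the transition model is needed.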

Q-Learning

- Q-Learning is a variation on Q-value iteration for the case where the transition probabilities and rewards are initially unknown: it learns the Q-values directly from experience

- An agent interacts with the environment and keeps track of the estimated Q-values for each state-action pair

- It's also a type of temporal difference learning (TD learning), which is kind of similar to stochastic gradient descent: each step nudges an estimate toward a noisy target computed from a single sample

- Interestingly, Q-learning is "off-policy" because it learns the optimal policy while following a different one (in this case, totally random exploration)

Q-Learning Update rule

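At each iteration, the Q estimate is updated according to:

$$Q(s, a) \leftarrow (1 - \alpha)\, Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') \right]$$

Where:

- $\alpha$ is the learning rate
- $r$ is the reward observed after taking action $a$ in state $s$
- $s'$ is the state the agent lands in, and $a'$ ranges over the actions available there
- $\gamma$ is the discount factor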
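A sketch of tabular Q-learning with purely random exploration. The toy MDP is an assumption and is used only as a simulator: the learner never reads the probabilities, it only sees sampled transitions. Resetting to the start state after reaching a terminal state is also an assumption of this sketch.

```python
import random

# Hypothetical environment, hidden from the learner:
# MDP[state][action] = list of (probability, next_state, reward)
MDP = {
    0: {
        "stay": [(1.0, 0, 0.0)],
        "go": [(0.8, 1, 1.0), (0.2, 0, 0.0)],
    },
    1: {"stay": [(1.0, 1, 0.0)]},
}


def step(mdp, state, action, rng):
    """Sample one transition; returns (next_state, reward)."""
    outcomes = mdp[state][action]
    weights = [p for p, _, _ in outcomes]
    _, next_state, reward = rng.choices(outcomes, weights=weights)[0]
    return next_state, reward


def q_learning(mdp, start, terminal, n_steps=20_000,
               alpha=0.1, gamma=0.9, seed=0):
    rng = random.Random(seed)
    Q = {s: {a: 0.0 for a in actions} for s, actions in mdp.items()}
    state = start
    for _ in range(n_steps):
        # Behavior policy: pick any available action uniformly at random.
        action = rng.choice(list(mdp[state]))
        next_state, reward = step(mdp, state, action, rng)
        # Target uses the greedy value of the next state (off-policy).
        target = reward + gamma * max(Q[next_state].values())
        Q[state][action] = (1 - alpha) * Q[state][action] + alpha * target
        # Restart the episode once a terminal state is reached.
        state = start if next_state in terminal else next_state
    return Q
```

With a constant learning rate the estimates keep fluctuating around the true Q-values rather than settling exactly; decaying `alpha` over time is a common remedy.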

Exploration policies

How do you balance short-term rewards, long-term rewards, and exploration?

- Our small example used a purely random policy

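- Common to start with a high exploration rate $\varepsilon$ ($\varepsilon$-greedy policy: act randomly with probability $\varepsilon$, otherwise take the action with the highest Q-value) and gradually decrease it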
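One common way to trade exploration against exploitation is an epsilon-greedy policy with a decaying epsilon. The sketch below assumes a linear decay schedule; the start value, end value, and decay length are illustrative choices, not values from the slides.

```python
import random


def epsilon_at(step, eps_start=1.0, eps_end=0.05, decay_steps=1000):
    """Linearly decay epsilon from eps_start to eps_end, then hold."""
    frac = min(step / decay_steps, 1.0)
    return eps_start + frac * (eps_end - eps_start)


def epsilon_greedy(q_values, epsilon, rng):
    """q_values maps each available action to its estimated Q-value."""
    if rng.random() < epsilon:
        # Explore: pick any action uniformly at random.
        return rng.choice(list(q_values))
    # Exploit: pick the action with the highest estimate.
    return max(q_values, key=q_values.get)
```

Early in training the agent acts almost entirely at random; later it mostly follows its current Q estimates while still occasionally trying other actions.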

Challenges with Q-Learning

We just converged on a 3-state problem in 10k iterations. How many states are in something like an Atari game?

How do we handle continuous state spaces?

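One approach: approximate Q-learning

- Find a function $Q_\theta(s, a)$, with parameters $\theta$, that approximates the Q-value of any state-action pair

- The number of parameters is kept manageable, far smaller than the number of states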

- Turns out that neural networks are great for this!
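To make the idea concrete without a neural network, the sketch below swaps in a linear model as the simplest possible approximator: the Q-value is a dot product between a weight vector and a hand-made feature vector for the state-action pair. The feature representation and function names are assumptions for illustration.

```python
def q_hat(theta, features):
    """Approximate Q-value: dot product of weights and features."""
    return sum(w * x for w, x in zip(theta, features))


def td_update(theta, features, reward, next_q_values,
              alpha=0.1, gamma=0.9):
    """One semi-gradient Q-learning step for a linear approximator.

    features      : feature vector of the (state, action) just taken
    next_q_values : approximate Q-values of every action in the next
                    state (empty if the next state is terminal)
    """
    target = reward + gamma * max(next_q_values, default=0.0)
    error = target - q_hat(theta, features)
    # For a linear model the gradient of q_hat w.r.t. theta is `features`.
    return [w + alpha * error * x for w, x in zip(theta, features)]


theta = [0.0, 0.0]
theta = td_update(theta, [1.0, 2.0], reward=1.0, next_q_values=[0.0])
```

The table of Q-values is gone: only `theta` is stored, so memory no longer grows with the number of states.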

Deep Q-Networks

- We know states, actions, and observed rewards

- We need to estimate the Q-values for each state-action pair

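- Target Q-values: $y = r + \gamma \max_{a'} Q_\theta(s', a')$, where $Q_\theta$ is the network's current estimate and $s'$ is the next state

- Loss function: $L(\theta) = \frac{1}{N} \sum_{i=1}^{N} \left( y_i - Q_\theta(s_i, a_i) \right)^2$ over a batch of $N$ observed transitions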

- Standard MSE, backpropagation, etc.
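The target and loss computation can be sketched independently of any deep learning library. Here `q_fn` is a hypothetical stand-in for the network: any callable that maps a state to a list of Q-values, one per action. The `done` flag, which zeroes the future term at the end of an episode, is a common convention assumed here rather than something stated above.

```python
def dqn_targets(batch, q_fn, gamma=0.99):
    """batch: list of (state, action, reward, next_state, done)."""
    targets = []
    for _, _, reward, next_state, done in batch:
        # No future reward is expected after a terminal transition.
        future = 0.0 if done else max(q_fn(next_state))
        targets.append(reward + gamma * future)
    return targets


def mse_loss(batch, targets, q_fn):
    """Mean squared error between targets and predicted Q(s, a)."""
    errors = [
        (y - q_fn(s)[a]) ** 2
        for (s, a, _, _, _), y in zip(batch, targets)
    ]
    return sum(errors) / len(errors)


def toy_q(state):
    """Made-up 'network' with two actions, for demonstration only."""
    return [state, 2 * state]


batch = [(1.0, 0, 1.0, 2.0, False), (2.0, 1, 0.0, 3.0, True)]
targets = dqn_targets(batch, toy_q, gamma=0.5)
loss = mse_loss(batch, targets, toy_q)
```

In a real DQN the targets are treated as constants during backpropagation, so gradients flow only through the predicted Q-values.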

Challenges with DQNs

- Catastrophic forgetting: just when it seems to converge, the network forgets what it learned about old states and comes crashing down

- The training data keeps changing as the agent's policy changes, which isn't great for gradient descent (it works best when the data distribution stays roughly fixed)

- The loss value isn't a good indicator of performance, particularly since we're estimating both the target and the Q-values

- Ultimately, reinforcement learning is inherently unstable!

The last topic: Generative AI and ethics

GenAI + Ethics Discussion

- AI-generated images have gotten really good

- What can we do? What should we do?