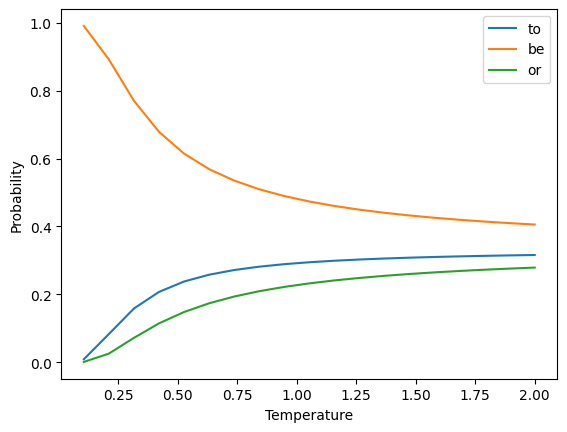

Temperature Example

- Vocab = ["to", "be", "or"]

- Assume that the "logits"

- Sample the next word from the resulting distribution
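A minimal sketch of temperature sampling, assuming the usual definition: divide the logits by a temperature T, apply softmax, then sample. The slide's actual logit values are not reproduced here; `logits = [2.0, 1.0, 0.5]` and the helper names are hypothetical.

```python
import math
import random

def softmax_with_temperature(logits, temperature=1.0):
    """Divide logits by the temperature, then apply a numerically stable softmax."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_word(vocab, logits, temperature=1.0, rng=random):
    """Draw one word from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

vocab = ["to", "be", "or"]
logits = [2.0, 1.0, 0.5]  # hypothetical values, not taken from the slide

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: " + ", ".join(f"{w}={p:.3f}" for w, p in zip(vocab, probs)))

print(sample_next_word(vocab, logits, temperature=1.0, rng=random.Random(0)))
```

Lower temperatures sharpen the distribution toward the highest-logit word; higher temperatures flatten it and make less likely words more common. The ranking of the words never changes, only how peaked the distribution is.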

In the beginning, there were n-grams

- A simple way to represent text is as a bag of n-grams

- unigram: single words (aka "Bag of Words"):

["the", "cat", "sat", "on", "the", "mat"]

- bigram: pairs of words:

["the cat", "cat sat", "sat on", "on the", "the mat"]

- trigram: triples of words:

["the cat sat", "cat sat on", "sat on the", "on the mat"]
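The lists above can be generated with a short helper. This is a sketch (the function name `ngrams` is our own); the `Counter` at the end shows the "bag" part: word order is discarded and only counts remain.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list, joined with spaces."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

tokens = "the cat sat on the mat".split()
for n, name in [(1, "unigram"), (2, "bigram"), (3, "trigram")]:
    print(name, ngrams(tokens, n))

# A bag of words keeps counts but throws away order
bag_of_words = Counter(ngrams(tokens, 1))
print(bag_of_words)
```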

Predictive text with n-grams

- Given a sequence of tokens, we can predict the probability of the next token

- Each of these conditional probabilities can be estimated from the frequency of the n-gram in a training corpus

- The most likely next word is the one with the highest probability
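A minimal bigram (n = 2) sketch of these steps, using a tiny hypothetical corpus: count how often each word follows another, estimate P(next | previous) as count(previous, next) divided by the number of times `previous` is followed by any word, and pick the most probable continuation. The function names are illustrative only.

```python
from collections import Counter, defaultdict

def train_bigram_model(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, prev):
    """Relative-frequency estimate of P(next | prev)."""
    following = counts.get(prev, Counter())
    total = sum(following.values())
    return {w: c / total for w, c in following.items()} if total else {}

def predict_next(counts, prev):
    """Most likely next word, or None if prev was never seen as a context."""
    probs = next_word_probs(counts, prev)
    return max(probs, key=probs.get) if probs else None

corpus = ["the cat sat on the mat", "the dog sat on the rug", "the cat ate"]  # toy data
model = train_bigram_model(corpus)
print(next_word_probs(model, "the"))
print(predict_next(model, "the"))
```

Note that any pair never seen in training gets probability zero, and an unseen context gets no prediction at all.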

What are some limitations of this approach?

- "The cat sat on the mat" vs. "The dog sat on the rug": nearly the same meaning, but n-grams treat "cat"/"dog" and "mat"/"rug" as unrelated tokens

- Also subject to the curse of dimensionality

- Vocabulary

- Most

- Vocabulary

Can you think of an alternative?

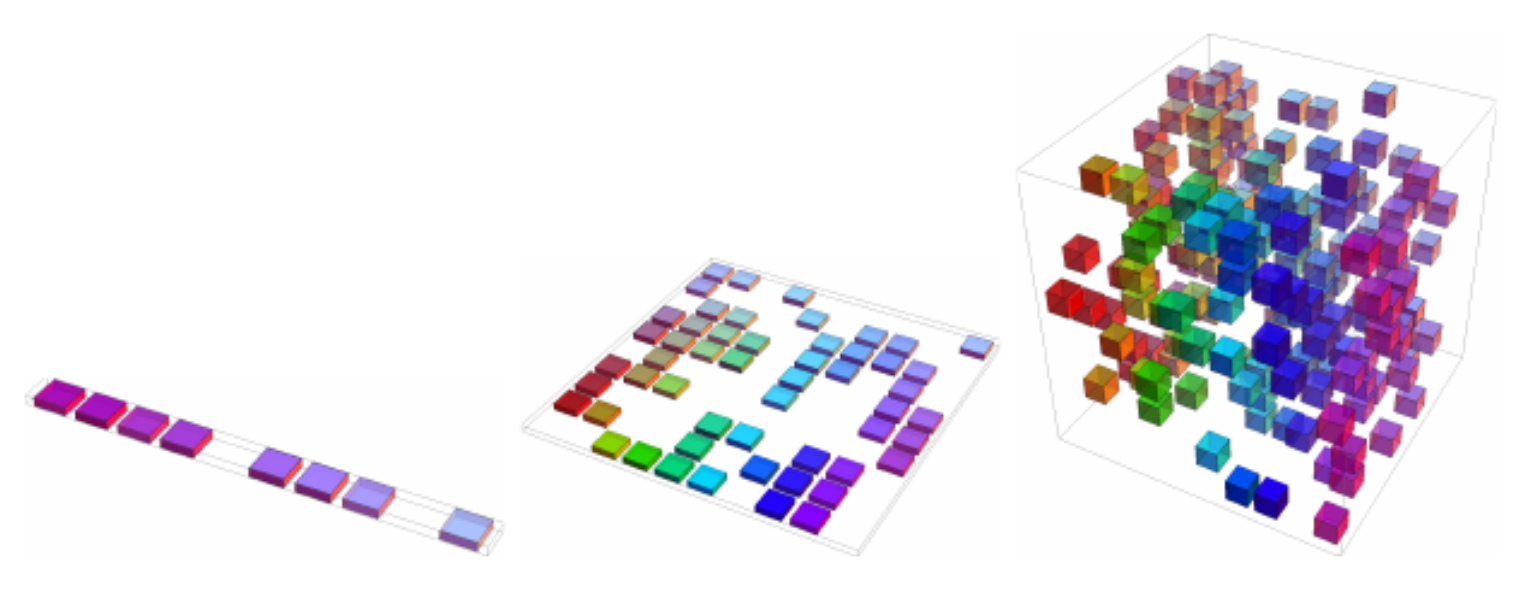

Side note: The curse of dimensionality

- Data is often represented as

- As

- Also called
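One way to see the curse of dimensionality for n-gram models: with a vocabulary of size |V| there are |V|^n possible n-grams, but any corpus only ever observes a tiny fraction of them. A sketch on a hypothetical two-sentence corpus (n-grams are collected per sentence, so none cross a sentence boundary):

```python
def ngram_set(sentences, n):
    """Distinct n-grams (as tuples) observed within each sentence."""
    seen = set()
    for sentence in sentences:
        tokens = sentence.split()
        seen.update(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return seen

sentences = ["the cat sat on the mat", "the dog sat on the rug"]
vocab = {w for s in sentences for w in s.split()}

coverage = {}
for n in (1, 2, 3):
    possible = len(vocab) ** n
    observed = len(ngram_set(sentences, n))
    coverage[n] = (observed, possible)
    print(f"n={n}: observed {observed} of {possible} possible ({observed / possible:.1%})")
```

Even with 7 words, the space of possible trigrams already has 343 entries; with a realistic vocabulary of tens of thousands of words, almost every possible n-gram is never seen.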

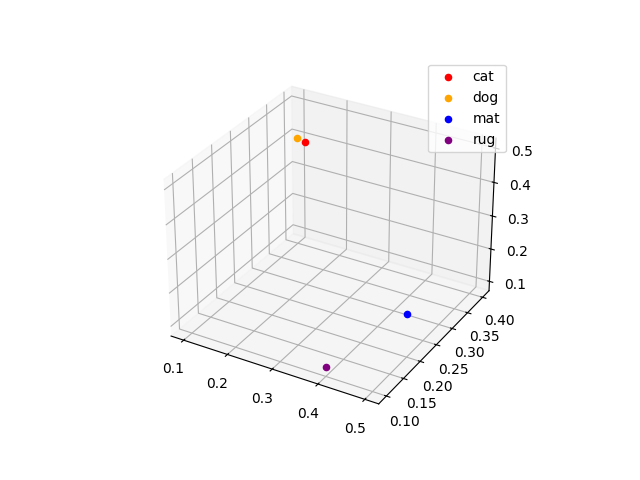

Word embeddings

- Alternative solution: represent individual words as vectors, or embeddings

- How are these embeddings defined?

| Word | Embedding |
|---|---|
| cat | [0.2, 0.3, 0.5] |
| dog | [0.1, 0.4, 0.4] |
| mat | [0.5, 0.2, 0.2] |
| rug | [0.4, 0.1, 0.1] |
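With embeddings, similarity between words becomes a geometric question. A sketch using cosine similarity on the toy vectors from the table (real embeddings typically have far more dimensions):

```python
import math

embeddings = {
    "cat": [0.2, 0.3, 0.5],
    "dog": [0.1, 0.4, 0.4],
    "mat": [0.5, 0.2, 0.2],
    "rug": [0.4, 0.1, 0.1],
}

def cosine_similarity(u, v):
    """Dot product divided by the product of the vector lengths."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

words = list(embeddings)
for i, w1 in enumerate(words):
    for w2 in words[i + 1:]:
        print(f"{w1}-{w2}: {cosine_similarity(embeddings[w1], embeddings[w2]):.3f}")
```

With these values, cat/dog and mat/rug come out as the two most similar pairs, which is exactly the relationship the n-gram representation could not express.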

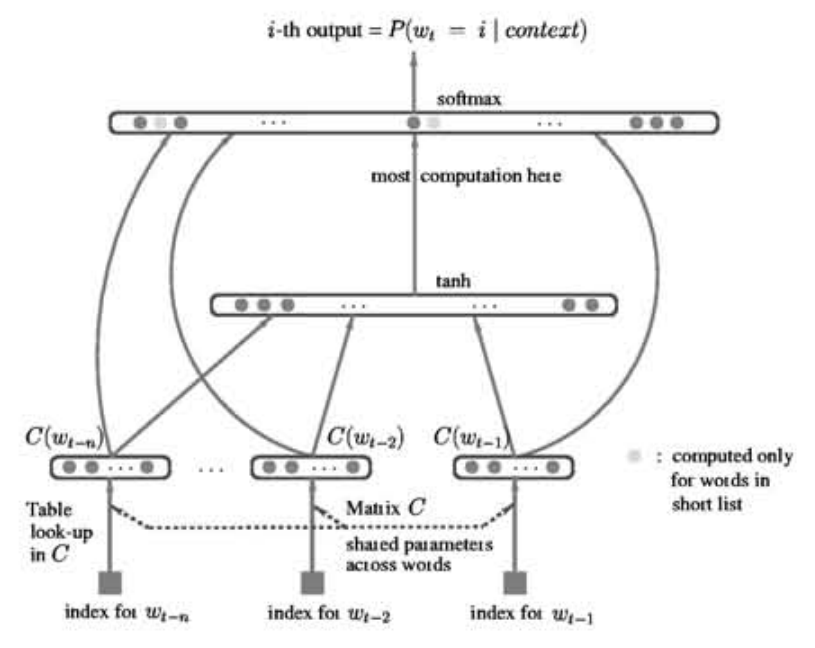

Learning word embeddings

- Wednesday we'll discuss the influential Word2Vec paper, but it wasn't the first time embeddings were learned as part of a network

- The concept was first presented successfully by Bengio in 2001
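Why embeddings can be learned "as part of a network": an embedding layer is just a trainable matrix E with one row per word. Looking up a word's row is the same as multiplying its one-hot vector by E, so backpropagation treats E like any other weight, and for a single lookup only that word's row receives a nonzero gradient. A sketch with the toy table values; the upstream gradient is made up for illustration.

```python
vocab = ["cat", "dog", "mat", "rug"]
E = [  # one row per word, one column per embedding dimension (toy values)
    [0.2, 0.3, 0.5],
    [0.1, 0.4, 0.4],
    [0.5, 0.2, 0.2],
    [0.4, 0.1, 0.1],
]

def one_hot(index, size):
    return [1.0 if i == index else 0.0 for i in range(size)]

def vec_times_matrix(vec, matrix):
    """Row vector (length V) times matrix (V x d) gives a vector of length d."""
    return [sum(vec[i] * matrix[i][j] for i in range(len(vec))) for j in range(len(matrix[0]))]

def embedding_grad(index, upstream_grad, vocab_size):
    """Gradient w.r.t. E for one lookup: outer(one_hot, upstream_grad)."""
    x = one_hot(index, vocab_size)
    return [[xi * g for g in upstream_grad] for xi in x]

idx = vocab.index("dog")
print(vec_times_matrix(one_hot(idx, len(vocab)), E), E[idx])  # same vector both ways

grad = embedding_grad(idx, [0.5, -1.0, 2.0], len(vocab))  # hypothetical upstream gradient
print(grad)  # only the "dog" row is nonzero
```

This is why frameworks implement embedding layers as an index lookup rather than an actual matrix multiplication: the result is identical and far cheaper.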

So we have embeddings, now what?

- We can use these embeddings as input to a neural network

- Applications:

  - Sentiment analysis: is a review/tweet/comment positive or negative?

  - Named entity recognition: who/what is mentioned in a text?

  - Machine translation: convert text from one language to another

  - Predictive text: what word comes next?

  - Text generation: create new text based on a given input

Sentiment analysis

General process:

- Standardize and tokenize the text

- Add an embedding layer (trainable or pre-trained)

- Add a recurrent layer, such as a GRU

- Add a dense layer with sigmoid activation
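A minimal forward-pass sketch of that pipeline in plain Python, to show how data flows through it: standardize and tokenize, look up embeddings, run a GRU over the sequence, and feed the final state to a sigmoid dense unit. Assumptions: the class and helper names are hypothetical, weights are random and untrained, biases are omitted, and the GRU uses the Keras-style update h = z * h_prev + (1 - z) * h_candidate. In Keras the same steps correspond to the `TextVectorization`, `Embedding`, `GRU`, and `Dense(1, activation="sigmoid")` layers.

```python
import math
import random
import re

def standardize_and_tokenize(text):
    """Lowercase, strip punctuation, and split on whitespace."""
    return re.sub(r"[^\w\s]", "", text.lower()).split()

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

class TinySentimentModel:
    """Hypothetical toy: embedding -> GRU -> sigmoid dense, random untrained weights, no biases."""

    def __init__(self, vocab, embed_dim=4, hidden_dim=3, seed=0):
        rng = random.Random(seed)
        # Index 0 is reserved for out-of-vocabulary tokens
        self.word_to_id = {w: i + 1 for i, w in enumerate(vocab)}
        self.embedding = rand_matrix(len(vocab) + 1, embed_dim, rng)
        # Input (W) and recurrent (U) weights for the update (z), reset (r) and candidate (h) gates
        self.W = {g: rand_matrix(hidden_dim, embed_dim, rng) for g in "zrh"}
        self.U = {g: rand_matrix(hidden_dim, hidden_dim, rng) for g in "zrh"}
        self.dense = [rng.uniform(-0.5, 0.5) for _ in range(hidden_dim)]
        self.hidden_dim = hidden_dim

    def gru_step(self, x, h):
        def gate(g, h_in):
            return [a + b for a, b in zip(matvec(self.W[g], x), matvec(self.U[g], h_in))]
        z = [sigmoid(v) for v in gate("z", h)]
        r = [sigmoid(v) for v in gate("r", h)]
        h_tilde = [math.tanh(v) for v in gate("h", [ri * hi for ri, hi in zip(r, h)])]
        # Keep a fraction z of the old state, take the rest from the candidate
        return [zi * hi + (1 - zi) * ti for zi, hi, ti in zip(z, h, h_tilde)]

    def predict(self, text):
        ids = [self.word_to_id.get(tok, 0) for tok in standardize_and_tokenize(text)]
        h = [0.0] * self.hidden_dim
        for i in ids:
            h = self.gru_step(self.embedding[i], h)
        # Dense layer with a single sigmoid output, read as P(positive)
        return sigmoid(sum(w * hi for w, hi in zip(self.dense, h)))

model = TinySentimentModel(vocab=["great", "movie", "terrible", "plot"])
print(model.predict("Great movie!"))
print(model.predict("Terrible plot."))
```

Until the weights are trained on labeled reviews, the printed probabilities carry no meaning; the sketch only shows the shape of the computation.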

To Colab!

This is the process you'll be following for Assignment 3

Sequence to Sequence models

Back to RNNs

- RNNs predict the future based on the past

- This is exactly what we want for predicting stock prices, weather, etc.

What about translating a sentence from one language to another?

Time flies like an arrow; fruit flies like a banana.

Can you think of a way to get RNNs to see the future?

Bidirectional RNNs

- Simple approach: run a second RNN over the reversed sequence and combine its outputs with those of the forward RNN
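A sketch of the bidirectional idea with a scalar tanh RNN (the weights `fwd` and `bwd` are hypothetical): one RNN reads left to right, a second reads the reversed sequence, its states are flipped back, and each position gets a (forward, backward) pair. The backward state at position 0 has already seen the whole sentence.

```python
import math

def rnn_states(inputs, w_in, w_rec):
    """Simple scalar RNN: h_t = tanh(w_in * x_t + w_rec * h_{t-1})."""
    h, states = 0.0, []
    for x in inputs:
        h = math.tanh(w_in * x + w_rec * h)
        states.append(h)
    return states

def bidirectional_rnn(inputs, fwd=(0.8, 0.5), bwd=(0.6, 0.4)):
    forward = rnn_states(inputs, *fwd)
    # Separate RNN over the reversed sequence, then flip its states back into original order
    backward = rnn_states(inputs[::-1], *bwd)[::-1]
    return list(zip(forward, backward))

a = bidirectional_rnn([1.0, 0.0, 0.0])
b = bidirectional_rnn([1.0, 0.0, 1.0])  # only the last input differs
print(a[0], b[0])
```

Changing only the last input leaves the forward state at position 0 untouched but changes the backward state there, which is exactly the "seeing the future" the question asks for.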

Pretraining

- Embeddings like Word2Vec have been trained on large corpora

- Surely this provides a great starting point for our models!

What are some potential drawbacks?

- ELMo was introduced in 2018 specifically to address the limitations of Word2Vec and GloVe (another popular embedding)

"Our representations differ from traditional word type embeddings in that each token is assigned a representation that is a function of the entire input sentence. We use vectors derived from a bidirectional LSTM that is trained with a coupled language model objective on a large text corpus" -- Peters et al. (2018)

Subword Tokenization

- Word embeddings are great, but still have limitations

- ELMo builds its token representations from characters (using a character-level CNN), so it can handle out-of-vocabulary words

- In between characters and words are subwords

"This warm weather is enjoyable" → ["This", "warm", "weath", "er", "is", "enjoy", "able"]

- Byte Pair Encoding (BPE) is one of the most common subword tokenization methods and is used by GPT models; BERT uses the closely related WordPiece method
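A sketch of the classic BPE merge-learning loop: start from characters (plus an end-of-word marker, here `</w>`, an assumed convention), repeatedly count adjacent symbol pairs weighted by word frequency, and merge the most frequent pair into a new symbol. Production tokenizers such as GPT-2's operate on bytes and add many details; the corpus and function names below are hypothetical.

```python
from collections import Counter

def get_pair_counts(vocab):
    """Count adjacent symbol pairs, weighted by how often each word occurs."""
    pairs = Counter()
    for word, freq in vocab.items():
        symbols = word.split()
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(pair, vocab):
    """Replace every adjacent occurrence of `pair` with a single merged symbol."""
    a, b = pair
    new_vocab = {}
    for word, freq in vocab.items():
        symbols, merged, i = word.split(), [], 0
        while i < len(symbols):
            if i < len(symbols) - 1 and symbols[i] == a and symbols[i + 1] == b:
                merged.append(a + b)
                i += 2
            else:
                merged.append(symbols[i])
                i += 1
        key = " ".join(merged)
        new_vocab[key] = new_vocab.get(key, 0) + freq
    return new_vocab

def learn_bpe(corpus, num_merges):
    word_counts = Counter(corpus.lower().split())
    # Each word starts as space-separated characters plus an end-of-word marker
    vocab = {" ".join(word) + " </w>": count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(vocab)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # ties go to the pair seen first
        vocab = merge_pair(best, vocab)
        merges.append(best)
    return merges, vocab

merges, vocab = learn_bpe("low low low lower lowest", num_merges=2)
print(merges)
print(vocab)
```

Frequent words end up as single symbols, while rare words such as "lowest" are still spelled out from smaller pieces, so no word is ever truly out of vocabulary.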

What are some advantages of subword tokenization?

Machine Translation

| English | Spanish |
|---|---|
| My mother did nothing but weep | Mi madre no hizo nada sino llorar |
| Croatia is in the southeastern part of Europe | Croacia está en el sudeste de Europa |
| I would prefer an honorable death | Preferiría una muerte honorable |
| I have never eaten a mango before | Nunca he comido un mango |

What kind of challenges can you think of?

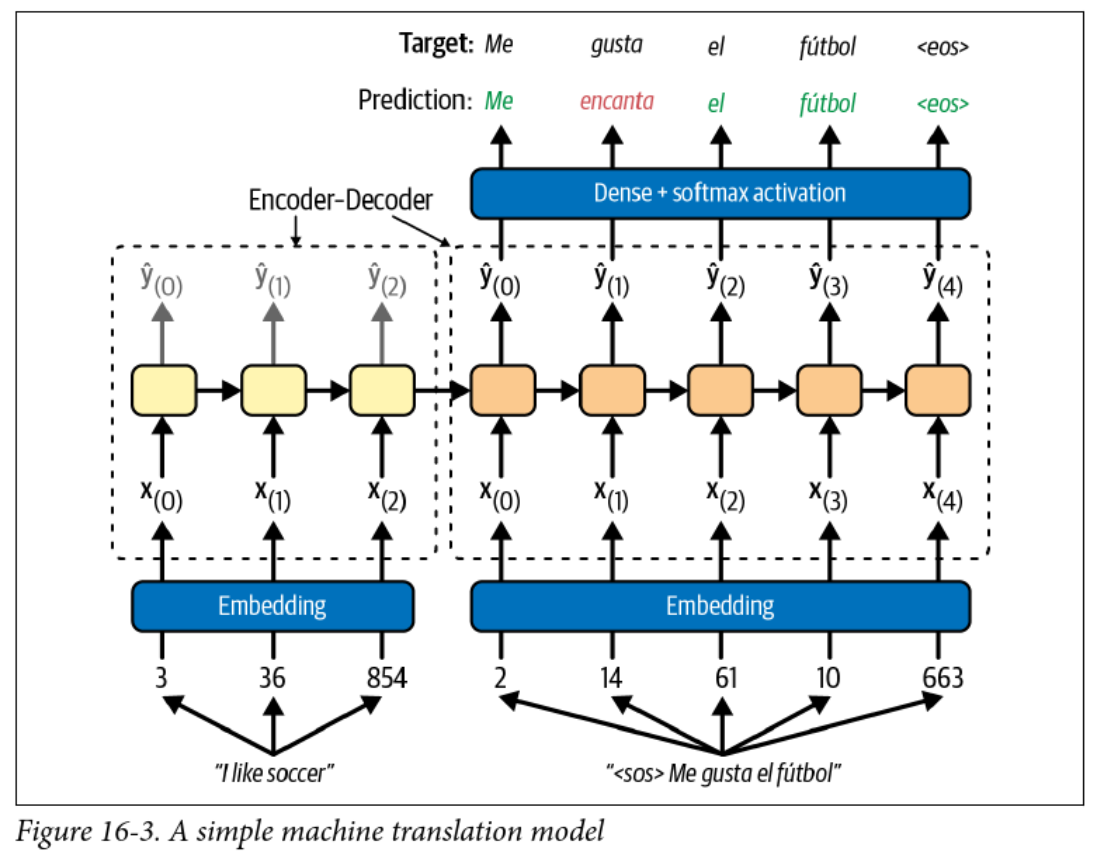

Encoder-Decoder Models

- RNNs can convert an arbitrary-length sequence into a fixed-length vector

- RNNs can convert a fixed-length vector into an arbitrary-length sequence

- Why not use two RNNs (an encoder and a decoder) to convert a sequence to a sequence?

- The decoder's output head is a softmax layer with one node for each word in the target vocabulary
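A toy sketch of the encoder-decoder structure with greedy decoding. To keep it short, the hidden state is a single number and all weights are random and untrained (both are simplifications, not how real models work); the vocabularies and the `<sos>`/`<eos>` start and end tokens are hypothetical. The encoder squeezes the source into a fixed-length state; the decoder starts from that state and emits one target word at a time from a softmax over the target vocabulary.

```python
import math
import random

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

class TinySeq2Seq:
    """Untrained encoder-decoder with a 1-D hidden state (hypothetical toy)."""

    def __init__(self, src_vocab, tgt_vocab, seed=0):
        rng = random.Random(seed)
        self.src_ids = {w: i for i, w in enumerate(src_vocab)}
        self.tgt_vocab = tgt_vocab
        self.tgt_ids = {w: i for i, w in enumerate(tgt_vocab)}
        # Encoder: one input weight per source word plus a recurrent weight
        self.enc_in = [rng.uniform(-1, 1) for _ in src_vocab]
        self.enc_rec = rng.uniform(-1, 1)
        # Decoder: input weight per target word, recurrent weight, softmax output layer
        self.dec_in = [rng.uniform(-1, 1) for _ in tgt_vocab]
        self.dec_rec = rng.uniform(-1, 1)
        self.out_w = [rng.uniform(-2, 2) for _ in tgt_vocab]
        self.out_b = [rng.uniform(-1, 1) for _ in tgt_vocab]

    def encode(self, src_tokens):
        h = 0.0
        for tok in src_tokens:
            h = math.tanh(self.enc_in[self.src_ids[tok]] + self.enc_rec * h)
        return h  # fixed-length "context" summarizing the whole source

    def decode_step(self, prev_token, h):
        h = math.tanh(self.dec_in[self.tgt_ids[prev_token]] + self.dec_rec * h)
        probs = softmax([w * h + b for w, b in zip(self.out_w, self.out_b)])
        return probs, h

    def greedy_translate(self, src_tokens, max_len=10):
        h = self.encode(src_tokens)
        prev, output = "<sos>", []
        for _ in range(max_len):
            probs, h = self.decode_step(prev, h)
            prev = self.tgt_vocab[probs.index(max(probs))]  # most probable word
            if prev == "<eos>":
                break
            output.append(prev)
        return output

tgt_vocab = ["<sos>", "<eos>", "el", "tiempo", "vuela"]
model = TinySeq2Seq(["time", "flies"], tgt_vocab)
print(model.greedy_translate(["time", "flies"], max_len=5))
```

Because the model is untrained, the "translation" is arbitrary; the point is that the source length and the output length are decoupled, with the encoder state as the only bridge between them.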

Teacher Forcing

- This model uses teacher forcing to train the decoder

- It feels like cheating, but this involves feeding the correct (ground-truth) previous output token to the decoder at each time step, instead of the decoder's own prediction

- This speeds up training and can improve performance

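- For models whose only recurrence runs from the previous output to the hidden state, teacher forcing decouples the time steps, so training avoids backpropagation through time and can be parallelized; decoders with hidden-to-hidden recurrence (e.g. GRU or LSTM) still need backpropagation through time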
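A sketch of how teacher forcing shapes the training loss: the decoder inputs are the target shifted right by one (starting with a `<sos>` token), and at every step the model is conditioned on the ground-truth prefix, never on its own earlier predictions. `predict_next` stands in for any decoder that maps a prefix to a probability per word; the uniform model and Spanish tokens are hypothetical.

```python
import math

def teacher_forced_loss(predict_next, target_tokens, sos="<sos>", eos="<eos>"):
    """Average cross-entropy when the decoder is always fed the ground-truth prefix."""
    decoder_inputs = [sos] + target_tokens  # target shifted right by one step
    expected = target_tokens + [eos]        # what the decoder should emit at each step
    loss = 0.0
    for t, y in enumerate(expected):
        probs = predict_next(decoder_inputs[: t + 1])  # ground-truth prefix, not model output
        loss -= math.log(probs[y])
    return loss / len(expected)

seen_prefixes = []

def uniform_model(prefix, vocab=("el", "tiempo", "vuela", "<eos>")):
    """Hypothetical decoder that records its input and predicts uniformly."""
    seen_prefixes.append(list(prefix))
    return {w: 1.0 / len(vocab) for w in vocab}

loss = teacher_forced_loss(uniform_model, ["el", "tiempo", "vuela"])
print(loss)
print(seen_prefixes)
```

The recorded prefixes are always correct, whatever the model would have predicted; every step's input is known in advance, which is what allows the steps to be computed independently.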

What are the implications at inference time?