Binary case: Bernoulli distribution

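- If a random variable $X$ takes the value 1 with probability $p$ and 0 with probability $1 - p$, it follows a Bernoulli distribution: $P(X = x) = p^x (1 - p)^{1 - x}$ for $x \in \{0, 1\}$

- The expected value of $X$ is $E[X] = 1 \cdot p + 0 \cdot (1 - p) = p$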
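A minimal sketch of the Bernoulli distribution, assuming the usual parameterization by a success probability `p`. The function names are chosen here for illustration and do not come from the slides.

```python
def bernoulli_pmf(x: int, p: float) -> float:
    """Return P(X = x) for x in {0, 1}, given success probability p."""
    if x not in (0, 1):
        raise ValueError("x must be 0 or 1")
    return p if x == 1 else 1.0 - p


def bernoulli_mean(p: float) -> float:
    """Compute E[X] by summing x * P(X = x) over both outcomes."""
    return sum(x * bernoulli_pmf(x, p) for x in (0, 1))
```

Summing over both outcomes leaves only the `x = 1` term, so the mean equals `p`.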

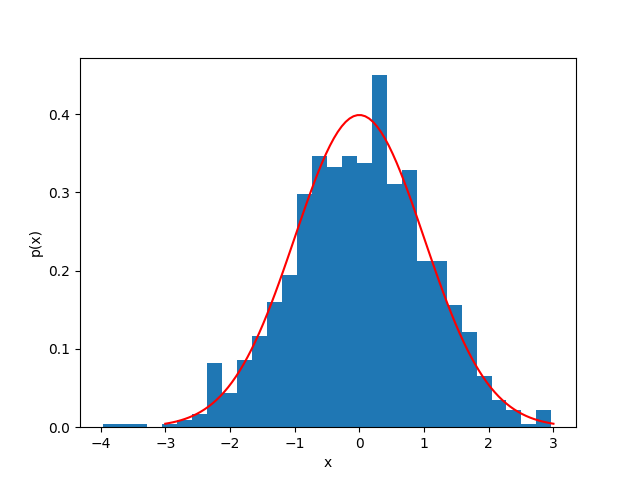

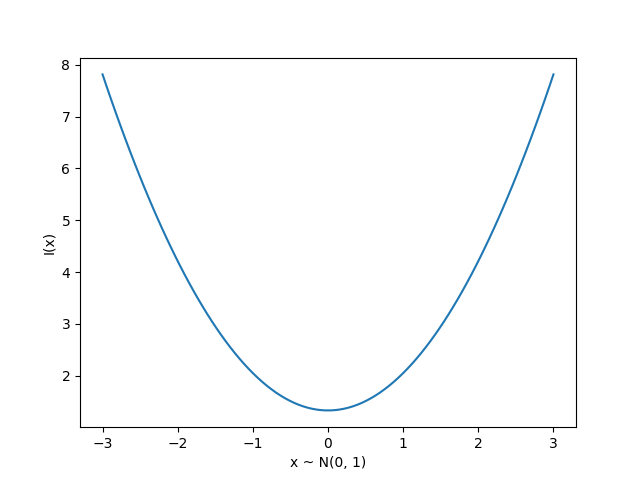

Information theory

Originally developed for message communication, with the intuition that less likely events carry more information. The information of a single event $x$ is defined as:

$$I(x) = -\log P(x)$$
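The intuition that rare events carry more information can be checked numerically. This is a small sketch that assumes the standard negative-log definition; `self_information` and its `base` parameter are names introduced here.

```python
import math


def self_information(p: float, base: float = 2.0) -> float:
    """Information of an event with probability p, in units set by `base`."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    # log(1/p) equals -log(p); dividing by log(base) changes the unit.
    return math.log(1.0 / p) / math.log(base)
```

With `base=2`, a fair coin flip carries one bit, and halving the probability of an event adds one more bit.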

Entropy

- We can measure the expected information of a distribution $P$: $H(P) = E_{x \sim P}[-\log P(x)] = -\sum_x P(x) \log P(x)$

- This is called the Shannon entropy

- Measured in bits (base 2) or nats (base $e$)

Find the entropy of a Bernoulli distribution
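One way to explore this exercise numerically is sketched below. It assumes the Shannon entropy formula applied to the two outcomes with probabilities $p$ and $1 - p$, and the usual convention that $0 \log 0 = 0$; the function name is illustrative.

```python
import math


def bernoulli_entropy(p: float, base: float = 2.0) -> float:
    """H(p) = -p log p - (1 - p) log(1 - p), with 0 log 0 treated as 0."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    total = 0.0
    for q in (p, 1.0 - p):
        if q > 0.0:  # skip zero-probability outcomes (0 log 0 = 0)
            total -= q * math.log(q) / math.log(base)
    return total
```

The entropy peaks at one bit for `p = 0.5` and falls to zero as the outcome becomes certain.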

Cross-entropy

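- The KL divergence is a measure of the extra information needed to encode a message from a true distribution $P$ using a code built for a model distribution $Q$: $D_{KL}(P \| Q) = \sum_x P(x) \log \frac{P(x)}{Q(x)}$

- The cross-entropy is a simplification that drops the entropy term $H(P)$: $H(P, Q) = -\sum_x P(x) \log Q(x) = H(P) + D_{KL}(P \| Q)$

- Minimizing the cross-entropy with respect to $Q$ is equivalent to minimizing the KL divergence, because $H(P)$ does not depend on $Q$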

- If
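The relationship between entropy, cross-entropy, and KL divergence can be verified on small discrete distributions. The sketch below uses natural logarithms (nats) and assumes `q` is nonzero wherever `p` is nonzero; all function names are introduced here.

```python
import math


def entropy(p):
    """H(P) = -sum_x P(x) log P(x), in nats."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """H(P, Q) = -sum_x P(x) log Q(x); assumes q[i] > 0 where p[i] > 0."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    """D_KL(P || Q) = sum_x P(x) log(P(x) / Q(x)); same assumption on q."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


p = [0.5, 0.25, 0.25]  # "true" distribution (made-up values)
q = [0.25, 0.25, 0.5]  # model distribution (made-up values)
gap = cross_entropy(p, q) - (entropy(p) + kl_divergence(p, q))
```

Here `gap` is zero up to floating-point error, which is the identity $H(P, Q) = H(P) + D_{KL}(P \| Q)$.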

Cross-entropy loss

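For a true label $y \in \{0, 1\}$ and a model prediction $\hat{y}$, the loss is:

$$L(y, \hat{y}) = -\left[ y \log \hat{y} + (1 - y) \log(1 - \hat{y}) \right]$$

where $\hat{y} \in (0, 1)$ is the predicted probability that the label is 1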

This is also called log loss or binary cross-entropy
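A minimal sketch of the binary cross-entropy averaged over a batch follows. Clipping predictions with a small `eps` is a common practical safeguard against `log(0)` and is an addition here, not something the slides specify.

```python
import math


def binary_cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean of -[y log(p) + (1 - y) log(1 - p)] over all examples."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep p strictly inside (0, 1)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)
```

Confident correct predictions give a loss near zero, while confident wrong predictions are penalized heavily.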

Terminology for evaluation

- True positive: predicted positive, label was positive (TP)

- True negative: predicted negative, label was negative (TN)

- False positive: predicted positive, label was negative (FP), also called a type I error

- False negative: predicted negative, label was positive (FN), also called a type II error

- Accuracy is the fraction of correct predictions, given as: $\frac{TP + TN}{TP + TN + FP + FN}$

Precision and recall

- Precision: Out of all the positive predictions, how many were correct? $\frac{TP}{TP + FP}$

- Recall: Out of all the positive labels, how many were predicted correctly? $\frac{TP}{TP + FN}$

- Specificity: Out of all the negative labels, how many were predicted correctly? $\frac{TN}{TN + FP}$
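These three ratios can be computed directly from the four outcome counts. In the sketch below, returning `0.0` when a denominator is zero is a convention chosen here, and the example counts are made up.

```python
def precision(tp: int, fp: int) -> float:
    """Fraction of positive predictions that were correct."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Fraction of positive labels that were found (sensitivity)."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def specificity(tn: int, fp: int) -> float:
    """Fraction of negative labels that were correctly rejected."""
    return tn / (tn + fp) if (tn + fp) else 0.0


# Illustrative counts for an imbalanced problem (not from the slides).
tp, fp, fn, tn = 8, 2, 4, 86
scores = (precision(tp, fp), recall(tp, fn), specificity(tn, fp))
```

With these counts the accuracy is 94%, yet a third of the positives are missed, which is why precision and recall are reported alongside accuracy.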

Confusion matrix

| | Predicted Positive | Predicted Negative |
|---|---|---|
| Actual Positive | TP | FN |
| Actual Negative | FP | TN |

- The axes might be reversed depending on the source, but a good predictor will concentrate its counts on the main diagonal (TP and TN)

- There's also the F1 score, or harmonic mean of precision and recall:

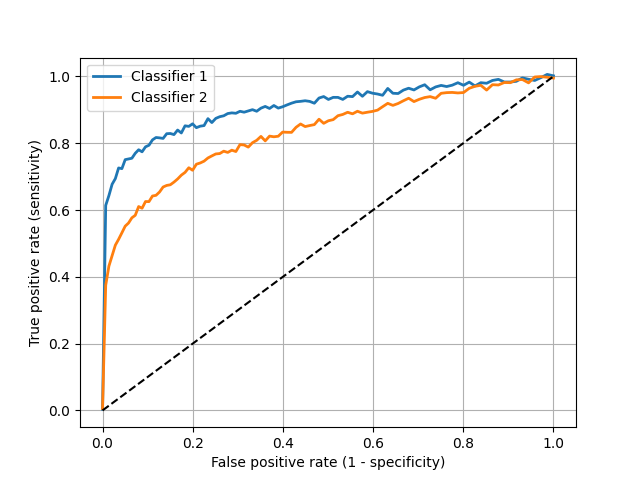
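As a sketch of how the confusion matrix entries and the F1 score can be computed from raw labels, assuming labels and predictions are encoded as 0 and 1 (the function names and example data are illustrative):

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels encoded as 0/1."""
    tp = fp = tn = fn = 0
    for t, p in zip(y_true, y_pred):
        if p == 1 and t == 1:
            tp += 1
        elif p == 1 and t == 0:
            fp += 1
        elif p == 0 and t == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall, written as 2TP / (2TP + FP + FN)."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
tp, fp, tn, fn = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / len(y_true)
```

The closed form `2TP / (2TP + FP + FN)` is algebraically the same as the harmonic mean of precision and recall, and avoids dividing by a zero precision or recall.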

ROC Curves

- The receiver operating characteristic curve is a plot of the true positive rate (recall or sensitivity) vs. false positive rate (1 - specificity) as the detection threshold changes

- The diagonal is the same as random guessing

- A perfect classifier would hug the top left corner
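The threshold sweep behind an ROC curve can be sketched as follows. This is a simple quadratic-time version for illustration, assuming 0/1 labels and real-valued scores where higher means "more positive"; the area computation uses the trapezoidal rule, and the names are introduced here.

```python
def roc_points(y_true, scores):
    """Return (fpr, tpr) pairs as the threshold moves from strict to loose."""
    pos = sum(y_true)
    neg = len(y_true) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one positive and one negative label")
    points = [(0.0, 0.0)]  # threshold above every score: nothing predicted positive
    for thr in sorted(set(scores), reverse=True):
        tp = sum(1 for t, s in zip(y_true, scores) if s >= thr and t == 1)
        fp = sum(1 for t, s in zip(y_true, scores) if s >= thr and t == 0)
        points.append((fp / neg, tp / pos))
    return points


def auc(points):
    """Area under the piecewise-linear curve (trapezoidal rule)."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area
```

An area of 1.0 corresponds to a curve that hugs the top left corner, while 0.5 matches the random-guessing diagonal.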

Fun fact: the name comes from WWII radar operators, where true positives were airplanes and false positives were noise

Which classifier is better?

Multiclass case

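- For $K$ classes, the label follows a categorical distribution, and the model outputs a probability $\hat{y}_k$ for each class $k$

- The cross-entropy loss is then: $L(y, \hat{y}) = -\sum_{k=1}^{K} y_k \log \hat{y}_k$

- For a one-hot encoded vector $y$, only the true class contributes: $L(y, \hat{y}) = -\log \hat{y}_c$

where $c$ is the index of the true class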
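A short sketch of the multiclass cross-entropy for a single example, assuming `y_true` is a one-hot (or more generally a probability) vector and `y_pred` holds predicted class probabilities. The `eps` floor is a practical guard against `log(0)` added here.

```python
import math


def categorical_cross_entropy(y_true, y_pred, eps=1e-12):
    """-sum_k y_k log(yhat_k) for one example."""
    return -sum(
        y * math.log(max(p, eps))
        for y, p in zip(y_true, y_pred)
        if y > 0  # terms with y_k = 0 contribute nothing
    )


# With a one-hot label, the loss is just -log of the true class probability.
loss = categorical_cross_entropy([0, 1, 0], [0.2, 0.7, 0.1])
```

Only the probability assigned to the true class affects the loss; how the remaining mass is split among the wrong classes does not matter.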

The softmax function

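- For binary classification, the sigmoid function $\sigma(z) = \frac{1}{1 + e^{-z}}$ maps a real-valued score $z$ to a probability in $(0, 1)$

- For multiclass classification, the softmax function is used:

$$\text{softmax}(z)_k = \frac{e^{z_k}}{\sum_{j=1}^{K} e^{z_j}}$$

where $z \in \mathbb{R}^K$ is the vector of class scores (logits)

- This means that every output is positive and the outputs sum to 1, so they can be read as class probabilities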
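The two functions can be sketched in a few lines. Subtracting the maximum logit before exponentiating is a standard numerical-stability trick added here; it does not change the result because softmax is invariant to shifting all inputs by a constant.

```python
import math


def sigmoid(z: float) -> float:
    """1 / (1 + exp(-z)), evaluated in a way that avoids overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def softmax(logits):
    """exp(z_k) / sum_j exp(z_j), shifted by max(logits) for stability."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([1.0, 2.0, 3.0])
```

For two classes with logits `[z, 0]`, the first softmax output equals `sigmoid(z)`, so the sigmoid can be seen as the two-class special case.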