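Consider the 1-d case:

$$\hat{y} = \theta_0 + \theta_1 x$$

We want the values of $\theta_0$ and $\theta_1$ that minimize the mean squared error (MSE) over the $m$ training instances:

$$\text{MSE}(\theta_0, \theta_1) = \frac{1}{m}\sum_{i=1}^{m}\left(\theta_0 + \theta_1 x^{(i)} - y^{(i)}\right)^2$$

Solving for $\theta_0$ by setting $\partial\,\text{MSE}/\partial\theta_0 = 0$:

$$\frac{2}{m}\sum_{i=1}^{m}\left(\theta_0 + \theta_1 x^{(i)} - y^{(i)}\right) = 0 \quad\Rightarrow\quad \theta_0 = \bar{y} - \theta_1\bar{x}$$

Math time!

Solving for $\theta_1$ by setting $\partial\,\text{MSE}/\partial\theta_1 = 0$ and substituting $\theta_0$:

$$\frac{2}{m}\sum_{i=1}^{m}\left(\theta_0 + \theta_1 x^{(i)} - y^{(i)}\right)x^{(i)} = 0$$

After some algebraic gymnastics, we get:

$$\theta_1 = \frac{\sum_{i=1}^{m}\left(x^{(i)} - \bar{x}\right)\left(y^{(i)} - \bar{y}\right)}{\sum_{i=1}^{m}\left(x^{(i)} - \bar{x}\right)^2}$$

where $\bar{x} = \frac{1}{m}\sum_{i=1}^{m} x^{(i)}$ and $\bar{y} = \frac{1}{m}\sum_{i=1}^{m} y^{(i)}$ are the sample means.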
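As a sanity check on the 1-d result, here is a minimal sketch that computes the slope as $\sum(x-\bar{x})(y-\bar{y}) / \sum(x-\bar{x})^2$ and the intercept as $\bar{y} - \theta_1\bar{x}$, then compares against NumPy's `np.polyfit`. NumPy, the helper name `fit_1d`, and the data are assumptions made for illustration only.

```python
import numpy as np

def fit_1d(x, y):
    """Closed-form least-squares fit of y ≈ theta0 + theta1 * x (hypothetical helper)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    x_bar, y_bar = x.mean(), y.mean()
    # Slope: sum of centered cross-products over sum of centered squares
    theta1 = np.sum((x - x_bar) * (y - y_bar)) / np.sum((x - x_bar) ** 2)
    # Intercept: the fitted line passes through the point of means (x_bar, y_bar)
    theta0 = y_bar - theta1 * x_bar
    return theta0, theta1

# Noiseless points from y = 4 + 3x: the fit should recover theta0 = 4, theta1 = 3
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 4.0 + 3.0 * x
theta0, theta1 = fit_1d(x, y)
print(theta0, theta1)

# Noisy made-up data: compare with np.polyfit, which returns [slope, intercept]
rng = np.random.default_rng(0)
x_noisy = rng.uniform(0, 2, size=100)
y_noisy = 4 + 3 * x_noisy + rng.normal(size=100)
slope, intercept = np.polyfit(x_noisy, y_noisy, 1)
print(np.allclose(fit_1d(x_noisy, y_noisy), (intercept, slope)))
```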

Expanding to matrix form

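Instead of the scalar $x$, we use the matrix $\mathbf{X}$ of shape $m \times (n+1)$ for $m$ instances and $n$ features:

$$\mathbf{X} = \begin{bmatrix} 1 & x_1^{(1)} & \cdots & x_n^{(1)} \\ 1 & x_1^{(2)} & \cdots & x_n^{(2)} \\ \vdots & \vdots & \ddots & \vdots \\ 1 & x_1^{(m)} & \cdots & x_n^{(m)} \end{bmatrix}$$

where each row is an instance (sample) and each column is a feature.

The first column is all ones, representing the bias term $\theta_0$.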
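A minimal sketch of building this matrix, assuming NumPy and a made-up feature array: prepend a column of ones to the raw features so that the first parameter acts as the bias.

```python
import numpy as np

# Hypothetical raw features: m = 4 instances (rows), n = 2 features (columns)
features = np.array([[2.0, 1.0],
                     [3.0, 0.5],
                     [5.0, 2.0],
                     [7.0, 1.5]])

m = features.shape[0]
# Prepend a column of ones so the first parameter multiplies 1, i.e. acts as the bias
X = np.hstack([np.ones((m, 1)), features])
print(X.shape)  # (4, 3)
```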

Back to the linear regression problem...

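- We can rewrite the estimate in matrix notation:

  $$\hat{\mathbf{y}} = \mathbf{X}\boldsymbol{\theta}$$

- The MSE can be written as:

  $$\text{MSE}(\boldsymbol{\theta}) = \frac{1}{m}\left(\mathbf{X}\boldsymbol{\theta} - \mathbf{y}\right)^\top\left(\mathbf{X}\boldsymbol{\theta} - \mathbf{y}\right)$$

  where we've used the trick of substituting $\sum_{i=1}^{m} v_i^2 = \mathbf{v}^\top\mathbf{v}$ for the residual vector $\mathbf{v} = \mathbf{X}\boldsymbol{\theta} - \mathbf{y}$

- Find the gradient of the MSE w.r.t. $\boldsymbol{\theta}$, set it to zero, and solve for $\boldsymbol{\theta}$:

  $$\nabla_{\boldsymbol{\theta}}\,\text{MSE}(\boldsymbol{\theta}) = \frac{2}{m}\mathbf{X}^\top\left(\mathbf{X}\boldsymbol{\theta} - \mathbf{y}\right) = \mathbf{0}$$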
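One way to convince yourself that the matrix gradient $\frac{2}{m}\mathbf{X}^\top(\mathbf{X}\boldsymbol{\theta} - \mathbf{y})$ is right is to compare it with a finite-difference estimate. This is a minimal NumPy sketch on random made-up data; the helper names `mse` and `mse_gradient` are hypothetical.

```python
import numpy as np

def mse(theta, X, y):
    v = X @ theta - y                 # residual vector
    return v @ v / len(y)             # (1/m) v^T v

def mse_gradient(theta, X, y):
    return 2.0 / len(y) * X.T @ (X @ theta - y)   # (2/m) X^T (X theta - y)

rng = np.random.default_rng(42)
X = np.hstack([np.ones((50, 1)), rng.normal(size=(50, 2))])  # bias column + 2 random features
y = rng.normal(size=50)
theta = rng.normal(size=3)

# Central finite differences: nudge one component of theta at a time
eps = 1e-6
numeric = np.array([
    (mse(theta + eps * e, X, y) - mse(theta - eps * e, X, y)) / (2 * eps)
    for e in np.eye(3)
])
analytic = mse_gradient(theta, X, y)
print(np.allclose(numeric, analytic, atol=1e-6))
```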

Properties of matrices and their transpose

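The following properties are useful for solving linear algebra problems:

- $(\mathbf{A}^\top)^\top = \mathbf{A}$

- $(\mathbf{A} + \mathbf{B})^\top = \mathbf{A}^\top + \mathbf{B}^\top$

- $(c\mathbf{A})^\top = c\,\mathbf{A}^\top$ for a scalar $c$

- $(\mathbf{A}\mathbf{B})^\top = \mathbf{B}^\top\mathbf{A}^\top$

- A scalar equals its own transpose, e.g. $\mathbf{y}^\top\mathbf{X}\boldsymbol{\theta} = (\mathbf{y}^\top\mathbf{X}\boldsymbol{\theta})^\top = \boldsymbol{\theta}^\top\mathbf{X}^\top\mathbf{y}$

Additionally, any matrix or vector multiplied by the identity matrix $\mathbf{I}$ is unchanged: $\mathbf{A}\mathbf{I} = \mathbf{I}\mathbf{A} = \mathbf{A}$.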
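To see how transpose properties lead from the MSE to the Normal Equation, here is a worked sketch of the algebra. It assumes the gradient identities $\nabla_{\boldsymbol{\theta}}(\boldsymbol{\theta}^\top\mathbf{A}\boldsymbol{\theta}) = 2\mathbf{A}\boldsymbol{\theta}$ for a symmetric $\mathbf{A}$ (here $\mathbf{A} = \mathbf{X}^\top\mathbf{X}$) and $\nabla_{\boldsymbol{\theta}}(\boldsymbol{\theta}^\top\mathbf{b}) = \mathbf{b}$, and that $\mathbf{X}^\top\mathbf{X}$ is invertible in the final step:

```latex
\begin{aligned}
m\,\text{MSE}(\boldsymbol{\theta})
  &= (\mathbf{X}\boldsymbol{\theta} - \mathbf{y})^\top(\mathbf{X}\boldsymbol{\theta} - \mathbf{y}) \\
  &= \boldsymbol{\theta}^\top\mathbf{X}^\top\mathbf{X}\boldsymbol{\theta}
     - \boldsymbol{\theta}^\top\mathbf{X}^\top\mathbf{y}
     - \mathbf{y}^\top\mathbf{X}\boldsymbol{\theta}
     + \mathbf{y}^\top\mathbf{y} \\
  &= \boldsymbol{\theta}^\top\mathbf{X}^\top\mathbf{X}\boldsymbol{\theta}
     - 2\,\boldsymbol{\theta}^\top\mathbf{X}^\top\mathbf{y}
     + \mathbf{y}^\top\mathbf{y} \\[4pt]
\nabla_{\boldsymbol{\theta}}\,\text{MSE}(\boldsymbol{\theta})
  &= \frac{1}{m}\left(2\,\mathbf{X}^\top\mathbf{X}\boldsymbol{\theta} - 2\,\mathbf{X}^\top\mathbf{y}\right)
   = \frac{2}{m}\mathbf{X}^\top(\mathbf{X}\boldsymbol{\theta} - \mathbf{y}) = \mathbf{0} \\[4pt]
\Rightarrow\; \mathbf{X}^\top\mathbf{X}\,\hat{\boldsymbol{\theta}} &= \mathbf{X}^\top\mathbf{y}
\;\Rightarrow\; \hat{\boldsymbol{\theta}} = (\mathbf{X}^\top\mathbf{X})^{-1}\mathbf{X}^\top\mathbf{y}
\end{aligned}
```

The first expansion uses $(\mathbf{X}\boldsymbol{\theta})^\top = \boldsymbol{\theta}^\top\mathbf{X}^\top$, and the second line merges the two cross terms because each is a scalar equal to its own transpose.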

The Normal Equation

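We made it! The Normal Equation is again:

$$\hat{\boldsymbol{\theta}} = \left(\mathbf{X}^\top\mathbf{X}\right)^{-1}\mathbf{X}^\top\mathbf{y}$$

- No optimization is required to find the optimal $\hat{\boldsymbol{\theta}}$: it is computed directly in closed form

- Limitations:

  - The computational complexity is roughly $O(n^{2.4})$ to $O(n^{3})$ in the number of features $n$, because the $(n+1) \times (n+1)$ matrix $\mathbf{X}^\top\mathbf{X}$ must be inverted

- Even in linear regression problems, it is common to use gradient descent instead due to these limitations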
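A minimal sketch of the Normal Equation in NumPy, on made-up data drawn from $y = 4 + 3x + \text{noise}$. Rather than forming $(\mathbf{X}^\top\mathbf{X})^{-1}$ explicitly, it solves the linear system $\mathbf{X}^\top\mathbf{X}\,\boldsymbol{\theta} = \mathbf{X}^\top\mathbf{y}$, which is cheaper and numerically safer; `np.linalg.lstsq` is used as a cross-check.

```python
import numpy as np

rng = np.random.default_rng(0)
m = 200
x = rng.uniform(0, 2, size=(m, 1))
y = 4 + 3 * x[:, 0] + rng.normal(scale=0.5, size=m)  # hypothetical data: y = 4 + 3x + noise

X = np.hstack([np.ones((m, 1)), x])                  # add the bias column

# Normal Equation: solve (X^T X) theta = X^T y instead of inverting X^T X
theta_hat = np.linalg.solve(X.T @ X, X.T @ y)

# Cross-check with NumPy's SVD-based least-squares solver
theta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)
print(theta_hat, np.allclose(theta_hat, theta_lstsq))
```

`lstsq` also copes with a rank-deficient $\mathbf{X}$, where $\mathbf{X}^\top\mathbf{X}$ is not invertible and `solve` would fail.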

Gradient Descent

The goal of gradient descent is still to minimize the cost function, but it follows an iterative process:

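1. Start with a random $\boldsymbol{\theta}$

2. Calculate the gradient $\nabla_{\boldsymbol{\theta}}\,\text{MSE}(\boldsymbol{\theta}) = \frac{2}{m}\mathbf{X}^\top\left(\mathbf{X}\boldsymbol{\theta} - \mathbf{y}\right)$

3. Update $\boldsymbol{\theta} \leftarrow \boldsymbol{\theta} - \eta\,\nabla_{\boldsymbol{\theta}}\,\text{MSE}(\boldsymbol{\theta})$

4. Repeat 2-3 until some stopping criterion is met

where $\eta$ is the learning rate, which controls the size of each step.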
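The four steps above can be sketched as batch gradient descent in NumPy. The learning rate, the fixed number of epochs used as the stopping criterion, and the data are illustrative assumptions; the result is compared with the Normal Equation solution.

```python
import numpy as np

def batch_gradient_descent(X, y, eta=0.1, n_epochs=1000, seed=0):
    m, n = X.shape
    rng = np.random.default_rng(seed)
    theta = rng.normal(size=n)                      # 1. random initialization
    for _ in range(n_epochs):                       # 4. stop after a fixed number of steps
        gradients = 2 / m * X.T @ (X @ theta - y)   # 2. MSE gradient over the full training set
        theta = theta - eta * gradients             # 3. step against the gradient
    return theta

rng = np.random.default_rng(1)
x = rng.uniform(0, 2, size=100)
y = 4 + 3 * x + rng.normal(scale=0.5, size=100)     # hypothetical data
X = np.column_stack([np.ones_like(x), x])

theta_gd = batch_gradient_descent(X, y)
theta_ne = np.linalg.solve(X.T @ X, X.T @ y)
print(theta_gd, np.allclose(theta_gd, theta_ne, atol=1e-4))
```

If `eta` is too large the updates overshoot and diverge; if it is too small, many more epochs are needed to reach the same point.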

Stochastic Gradient Descent

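- Standard (batch) gradient descent uses the entire training set to calculate the gradient at every step

- Stochastic Gradient Descent uses a single random instance at each step:

  1. Start with a random $\boldsymbol{\theta}$

  2. Pick a random instance $\left(\mathbf{x}^{(i)}, y^{(i)}\right)$

  3. Calculate the gradient on that instance alone: $2\,\mathbf{x}^{(i)}\left(\mathbf{x}^{(i)\top}\boldsymbol{\theta} - y^{(i)}\right)$

  4. Update $\boldsymbol{\theta} \leftarrow \boldsymbol{\theta} - 2\eta\,\mathbf{x}^{(i)}\left(\mathbf{x}^{(i)\top}\boldsymbol{\theta} - y^{(i)}\right)$ with learning rate $\eta$

  5. Repeat 2-4 until some stopping criterion is met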

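A minimal sketch of the stochastic steps in NumPy, assuming a constant learning rate, $m$ single-instance steps per epoch, and made-up data. Because each step sees only one instance, the final $\boldsymbol{\theta}$ lands near, but not exactly at, the Normal Equation solution.

```python
import numpy as np

def sgd(X, y, eta=0.01, n_epochs=50, seed=0):
    m, n = X.shape
    rng = np.random.default_rng(seed)
    theta = rng.normal(size=n)                      # 1. random initialization
    for _ in range(n_epochs):                       # 5. stop after a fixed number of epochs
        for _ in range(m):                          # m single-instance steps per epoch
            i = rng.integers(m)                     # 2. pick a random instance
            xi, yi = X[i], y[i]
            gradient = 2 * xi * (xi @ theta - yi)   # 3. gradient of (x_i^T theta - y_i)^2
            theta = theta - eta * gradient          # 4. update
    return theta

rng = np.random.default_rng(1)
x = rng.uniform(0, 2, size=100)
y = 4 + 3 * x + rng.normal(scale=0.5, size=100)     # hypothetical data
X = np.column_stack([np.ones_like(x), x])

theta_sgd = sgd(X, y)
theta_ne = np.linalg.solve(X.T @ X, X.T @ y)
print(theta_sgd, theta_ne)   # close, but SGD keeps bouncing around the minimum
```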

Mini-batch Gradient Descent

- Mini-batch gradient descent computes the gradient at each step on a small random subset of the training set (a mini-batch)

- Less chaotic than stochastic, but faster than batch

- Most common type of gradient descent used in practice
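A minimal mini-batch sketch in NumPy. Shuffling once per epoch and slicing consecutive batches is one common way to draw the random subsets, assumed here; the batch size, learning rate, and data are illustrative.

```python
import numpy as np

def minibatch_gd(X, y, eta=0.05, batch_size=16, n_epochs=200, seed=0):
    m, n = X.shape
    rng = np.random.default_rng(seed)
    theta = rng.normal(size=n)
    for _ in range(n_epochs):
        perm = rng.permutation(m)                   # shuffle instance indices once per epoch
        for start in range(0, m, batch_size):
            idx = perm[start:start + batch_size]    # one random mini-batch
            Xb, yb = X[idx], y[idx]
            gradients = 2 / len(idx) * Xb.T @ (Xb @ theta - yb)  # MSE gradient on the batch only
            theta = theta - eta * gradients
    return theta

rng = np.random.default_rng(1)
x = rng.uniform(0, 2, size=100)
y = 4 + 3 * x + rng.normal(scale=0.5, size=100)     # hypothetical data
X = np.column_stack([np.ones_like(x), x])

theta_mb = minibatch_gd(X, y)
theta_ne = np.linalg.solve(X.T @ X, X.T @ y)
print(theta_mb, theta_ne)
```

Averaging over a batch smooths the gradient compared with single-instance SGD, and the batch is processed with vectorized matrix operations, which is where much of the practical speedup comes from.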

Gradient Descent Hyperparameters

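- The learning rate $\eta$

  - No rule that it needs to be constant! A simple learning schedule is to decrease $\eta$ over time, e.g.

    $$\eta(t) = \frac{t_0}{t + t_1}$$

    where $t$ is the step (iteration) number and $t_0$, $t_1$ are constants chosen in advance

- For mini-batch, the batch size is another hyperparameter

- The number of epochs, i.e., the number of passes through the entire training set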
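A minimal sketch of a decaying learning schedule, assuming the form $\eta(t) = t_0 / (t + t_1)$; this is one common choice, and the values $t_0 = 5$, $t_1 = 50$ are hypothetical.

```python
def learning_schedule(t, t0=5.0, t1=50.0):
    """Hypothetical schedule eta(t) = t0 / (t + t1): starts at t0 / t1 and decays toward 0."""
    return t0 / (t + t1)

etas = [learning_schedule(t) for t in (0, 50, 450, 4950)]
print(etas)  # [0.1, 0.05, 0.01, 0.001]
```

In an SGD loop, the step count would typically be `t = epoch * m + i`, so large early steps help escape poor regions and small late steps let $\boldsymbol{\theta}$ settle near the minimum.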

Stopping Criteria

- The simplest stopping criterion is to set a maximum number of epochs

- Early stopping is another option:

  - Evaluate on a validation set at regular intervals

  - Stop when the validation error starts to increase

- The comparison between training and validation performance can also help prevent overfitting
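A minimal early-stopping sketch wrapped around batch gradient descent in NumPy. The `patience` parameter is a hypothetical addition: instead of stopping at the first uptick, it stops after that many epochs without a new best validation error, which tolerates small fluctuations. The best parameters seen so far are returned, not the last ones.

```python
import numpy as np

def train_with_early_stopping(X_tr, y_tr, X_val, y_val,
                              eta=0.05, max_epochs=1000, patience=20, seed=0):
    rng = np.random.default_rng(seed)
    theta = rng.normal(size=X_tr.shape[1])
    best_theta, best_val, best_epoch = theta.copy(), np.inf, 0
    for epoch in range(max_epochs):                        # hard cap on the number of epochs
        grad = 2 / len(y_tr) * X_tr.T @ (X_tr @ theta - y_tr)
        theta = theta - eta * grad
        val_mse = np.mean((X_val @ theta - y_val) ** 2)    # evaluate on the validation set
        if val_mse < best_val:                             # new best: remember these parameters
            best_theta, best_val, best_epoch = theta.copy(), val_mse, epoch
        elif epoch - best_epoch >= patience:               # no improvement for `patience` epochs
            break
    return best_theta, best_val, best_epoch

rng = np.random.default_rng(2)
x = rng.uniform(0, 2, size=120)
y = 4 + 3 * x + rng.normal(scale=0.5, size=120)            # hypothetical data
X = np.column_stack([np.ones_like(x), x])

theta, val_mse, epoch = train_with_early_stopping(X[:80], y[:80], X[80:], y[80:])
print(f"best validation MSE {val_mse:.3f} at epoch {epoch}")
```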

Loss functions

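- The loss function is the function being minimized by gradient descent

- For linear regression, the MSE is a convex function of $\boldsymbol{\theta}$, so it has a single global minimum (unique whenever $\mathbf{X}^\top\mathbf{X}$ is invertible) and no other local minima, but many other loss functions have multiple local minima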

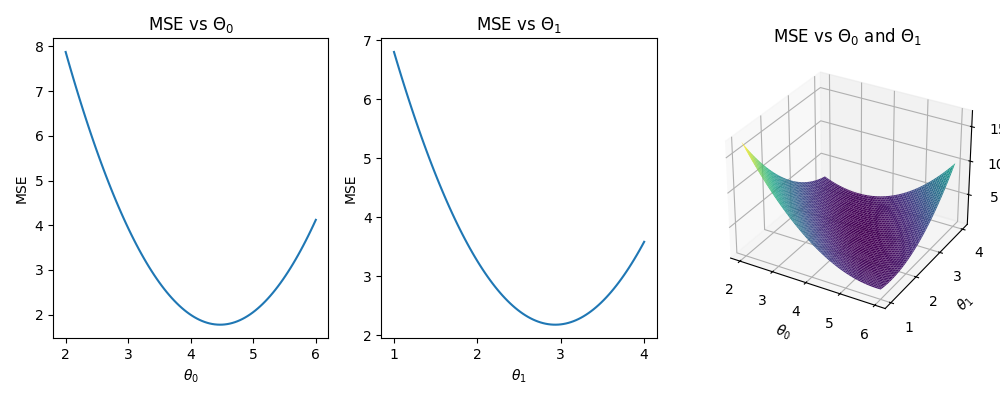

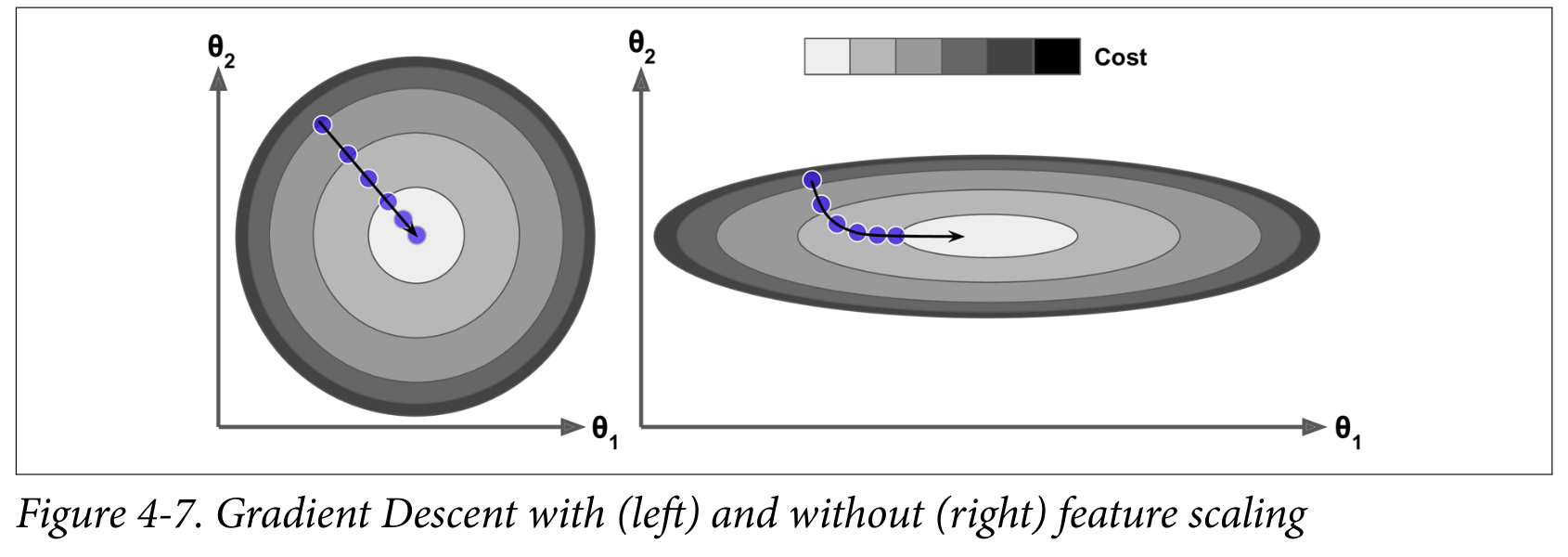

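The relative scale of the features affects convergence: when features have very different scales, the cost function becomes an elongated bowl and gradient descent takes much longer to reach the minimum, so features are usually standardized first.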
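One rough way to quantify the "elongated bowl" is the condition number of $\mathbf{X}^\top\mathbf{X}$ (the ratio of its largest to smallest eigenvalue): the larger it is, the slower fixed-step gradient descent converges. This NumPy sketch compares it before and after standardization on made-up features with scales of about 1 and about 1000.

```python
import numpy as np

rng = np.random.default_rng(0)
m = 500
raw = np.column_stack([
    rng.uniform(0, 1, size=m),       # feature on a scale of ~1
    rng.uniform(0, 1000, size=m),    # feature on a scale of ~1000
])

def design(features):
    # Prepend the bias column of ones
    return np.hstack([np.ones((len(features), 1)), features])

# Standardize each feature to zero mean and unit variance
scaled = (raw - raw.mean(axis=0)) / raw.std(axis=0)

cond_raw = np.linalg.cond(design(raw).T @ design(raw))
cond_scaled = np.linalg.cond(design(scaled).T @ design(scaled))
print(f"raw: {cond_raw:.3g}, standardized: {cond_scaled:.3g}")
```

In practice the mean and standard deviation should be computed on the training set only and then reused to transform validation and test data.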

Higher-order Polynomials

- Higher-order polynomials can be solved with the Normal Equation as well:

  - Just include the higher-order terms (e.g., $x^2, x^3, \dots$) as extra columns in $\mathbf{X}$

  - This is still a linear regression problem because the model is linear in the coefficients $\boldsymbol{\theta}$, even though it is nonlinear in $x$!

- Risk of overfitting the data

- Easy way to regularize: drop one or more of the higher-order terms
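A minimal sketch of polynomial regression with the unchanged Normal Equation: the only difference is that $\mathbf{X}$ gains an $x^2$ column. The noiseless quadratic $y = 1 + 2x + 3x^2$ is a made-up example, so the fit should recover its coefficients.

```python
import numpy as np

x = np.linspace(-2, 2, 30)
y = 1 + 2 * x + 3 * x ** 2          # hypothetical noiseless quadratic

# Same Normal Equation as before; X now holds the columns 1, x, x^2
X = np.column_stack([np.ones_like(x), x, x ** 2])
theta = np.linalg.solve(X.T @ X, X.T @ y)
print(np.round(theta, 6))           # approximately [1, 2, 3]
```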

Regularization

- If the model fits the training data too well, but doesn't generalize to new data, it is overfitting

- Regularization imposes additional constraints on the weights

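- Example: Ridge Regression adds a term to the loss function:

  $$J(\boldsymbol{\theta}) = \text{MSE}(\boldsymbol{\theta}) + \alpha\sum_{i=1}^{n}\theta_i^2$$

  where the hyperparameter $\alpha \ge 0$ controls how strongly the weights are pushed toward zero (the bias term $\theta_0$ is not regularized)

- The regularization term is only added during training, not evaluation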
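A minimal closed-form Ridge sketch in NumPy, assuming the penalty has the form $\alpha\sum_{i\ge1}\theta_i^2$ added to the MSE (one common convention; others scale it by $\tfrac{1}{2}$ or $\tfrac{1}{m}$). Setting the gradient to zero gives $(\mathbf{X}^\top\mathbf{X} + m\alpha\mathbf{A})\,\boldsymbol{\theta} = \mathbf{X}^\top\mathbf{y}$, where $\mathbf{A}$ is the identity with a 0 in the bias position. The helper names and data are hypothetical.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Minimize MSE(theta) + alpha * sum_{i>=1} theta_i^2 in closed form (bias unpenalized)."""
    m, n = X.shape
    A = np.eye(n)
    A[0, 0] = 0.0                    # do not penalize the bias term theta_0
    # Gradient (2/m) X^T (X theta - y) + 2 alpha A theta = 0  =>  (X^T X + m alpha A) theta = X^T y
    return np.linalg.solve(X.T @ X + m * alpha * A, X.T @ y)

def evaluation_mse(theta, X, y):
    return np.mean((X @ theta - y) ** 2)   # evaluation: plain MSE, no penalty term

rng = np.random.default_rng(3)
X = np.hstack([np.ones((40, 1)), rng.normal(size=(40, 3))])
y = X @ np.array([1.0, 2.0, -1.0, 0.5]) + rng.normal(scale=0.3, size=40)  # hypothetical data

theta_ols = ridge_fit(X, y, alpha=0.0)     # alpha = 0 reduces to ordinary least squares
theta_ridge = ridge_fit(X, y, alpha=1.0)
print(np.linalg.norm(theta_ols[1:]), np.linalg.norm(theta_ridge[1:]))  # ridge weights are smaller
print(evaluation_mse(theta_ridge, X, y))
```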

Note: the term cost function is often used instead of loss function

Logistic regression and beyond

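Logistic regression is a binary classifier that uses the logistic function (aka sigmoid function) to map the output of a linear model to the range 0 to 1, interpreted as the estimated probability of the positive class:

$$\hat{p} = \sigma\left(\mathbf{x}^\top\boldsymbol{\theta}\right), \qquad \sigma(t) = \frac{1}{1 + e^{-t}}$$

We can then minimize the log loss or cross-entropy loss function:

$$J(\boldsymbol{\theta}) = -\frac{1}{m}\sum_{i=1}^{m}\left[y^{(i)}\log\hat{p}^{(i)} + \left(1 - y^{(i)}\right)\log\left(1 - \hat{p}^{(i)}\right)\right]$$

where $y^{(i)} \in \{0, 1\}$ is the label of instance $i$ and $\hat{p}^{(i)} = \sigma\left(\mathbf{x}^{(i)\top}\boldsymbol{\theta}\right)$.

The gradient of the log loss ends up being:

$$\nabla_{\boldsymbol{\theta}}J(\boldsymbol{\theta}) = \frac{1}{m}\mathbf{X}^\top\left(\sigma(\mathbf{X}\boldsymbol{\theta}) - \mathbf{y}\right)$$

with $\sigma$ applied element-wise.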
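A minimal NumPy sketch of the sigmoid, the log loss $-\frac{1}{m}\sum[y\log\hat{p} + (1-y)\log(1-\hat{p})]$, and its gradient $\frac{1}{m}\mathbf{X}^\top(\sigma(\mathbf{X}\boldsymbol{\theta}) - \mathbf{y})$, checked against central finite differences. The function names and the random data are hypothetical.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def log_loss(theta, X, y):
    p = sigmoid(X @ theta)                                 # estimated probabilities p_hat
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def log_loss_gradient(theta, X, y):
    return X.T @ (sigmoid(X @ theta) - y) / len(y)         # (1/m) X^T (sigma(X theta) - y)

rng = np.random.default_rng(4)
X = np.hstack([np.ones((60, 1)), rng.normal(size=(60, 2))])
y = (rng.uniform(size=60) < 0.5).astype(float)             # random 0/1 labels
theta = rng.normal(scale=0.5, size=3)

# Central finite differences, one component of theta at a time
eps = 1e-6
numeric = np.array([
    (log_loss(theta + eps * e, X, y) - log_loss(theta - eps * e, X, y)) / (2 * eps)
    for e in np.eye(3)
])
print(np.allclose(numeric, log_loss_gradient(theta, X, y), atol=1e-6))
```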

- There is no known closed-form (analytical) solution this time, but we can still use gradient descent!

- In this case the loss is still convex, so we don't have to worry about local minima

- In general, for a loss function to work with gradient descent, it must be continuous and differentiable, at least at the points where you evaluate it
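Because the log loss is convex and differentiable everywhere, plain batch gradient descent applies directly. This minimal NumPy sketch trains logistic regression on made-up 2-feature data whose labels come from a noisy linear boundary $x_1 + x_2 > 0$; the learning rate, epoch count, and zero initialization are illustrative assumptions.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def fit_logistic(X, y, eta=0.5, n_epochs=2000):
    theta = np.zeros(X.shape[1])     # with a convex loss, no local minima can trap this start
    for _ in range(n_epochs):
        theta -= eta * X.T @ (sigmoid(X @ theta) - y) / len(y)   # log loss gradient step
    return theta

rng = np.random.default_rng(5)
x = rng.normal(size=(200, 2))
# Hypothetical labels from a noisy linear boundary x1 + x2 > 0
y = ((x[:, 0] + x[:, 1] + rng.normal(scale=0.5, size=200)) > 0).astype(float)
X = np.hstack([np.ones((200, 1)), x])

theta = fit_logistic(X, y)
accuracy = np.mean((sigmoid(X @ theta) >= 0.5) == y)   # classify with a 0.5 threshold
print(theta, accuracy)
```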